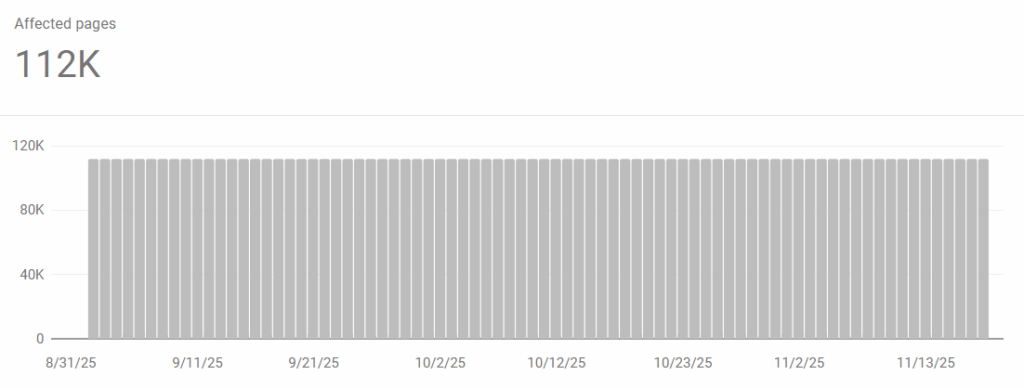

If you manage large websites, dealing with Google Search Console crawl errors can be a nightmare. You expect to see green “Indexed” bars, but instead, you are greeted by thousands of URLs flagged as “Blocked due to access forbidden (403)” or “Blocked by robots.txt.”

Google tells you that there is an error, but it rarely tells you why.

Is it a plugin? A firewall? A bad line of code?

Here is how to debug the top 3 crawling errors developers face, and a tool I built to diagnose them instantly.

1. Blocked due to access forbidden (403)

This is often the most confusing error. You click the URL, it opens fine in your browser (200 OK), but Googlebot gets a 403.

The Cause: This is rarely an SEO setting. It is usually a Web Application Firewall (WAF) issue. Security tools like Cloudflare, Wordfence, Akamai, or server-level iptables rules are likely blocking the specific User-Agent or IP range of Googlebot, thinking it’s a scraper or an attack.

The Fix: You need to verify if the server specifically hates Googlebot.

- Go to CrawlerCheck.com.

- EEnter the URL throwing the error and click Check Crawlers.

- Scroll down to the “Google Bots” section.

If CrawlerCheck shows a 403 Forbidden or Blocked status for Googlebot but you can visit the page normally in your browser (a 200 OK for a standard browser user), you have confirmed the issue: your server’s firewall is blocking Google’s crawler and you need to whitelist Google’s User-Agent in your WAF settings.

2. Blocked by robots.txt

This is the “Polite Block.” Googlebot read your robots.txt file, saw a Disallow rule, and stopped.

The Problem: On complex sites (especially Magento, WordPress, or custom JS apps), robots.txt files can get messy. You might have a rule like Disallow: /api/ that unintentionally blocks /api-guide/.

The Fix: Don’t guess which line is the culprit.

- Paste the URL into CrawlerCheck.

- The tool parses your live

robots.txtfile. - It highlights the exact line number and rule that is triggering the block.

3. Excluded by ‘noindex’ tag

This status means Google accessed the page, but you explicitly told it not to index it.

The Trap: Sometimes you check the HTML source code and don’t see a <meta name="robots" content="noindex"> tag. So why is Google ignoring it?

It’s likely hidden in the HTTP Headers (X-Robots-Tag). Plugins and server configs can inject noindex headers that are invisible in the page source code but visible to bots.

The Fix: Use a header inspector or simply run it through CrawlerCheck. The tool scans both the HTML Meta Tags and the HTTP Response Headers to find hidden noindex directives that browser “View Source” might miss.

Summary

Search Console is great for reporting problems, but bad at diagnosing them. If you are seeing crawl errors, stop guessing and start simulating the bot.